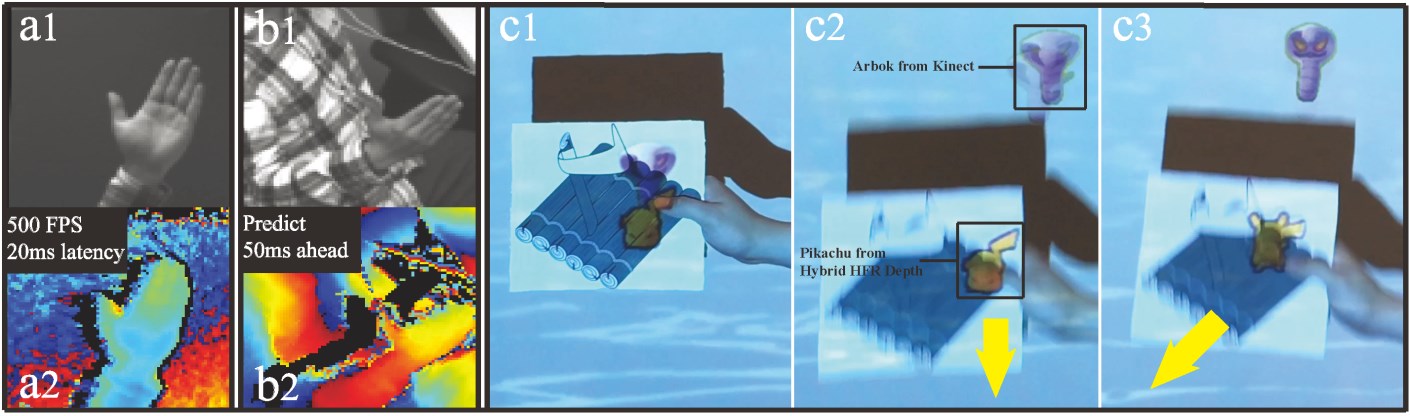

Hybrid HFR Depth: Fusing Depth and Color Cameras for High Speed, Low Latency Depth Camera Interactions

Authors: Jiajun Lu, Hrvoje Benko, Andrew D. Wilson

SIGCHI 2017

Abstract

The low frame rate and high latency of consumer depth cameras limit their use in interactive applications. We propose combining the Kinect depth camera with an ordinary color camera to synthesize a high frame rate, low latency depth image. We exploit the region of interest (ROI) functionality common to CMOS cameras to obtain a high frame rate image over a small ROI. Motion in the ROI is computed by a fast optical flow implementation. The resulting flow field is used to extrapolate Kinect depth images to achieve high frame rate and low latency depth, and optionally to predict depth to further reduce latency. Our “Hybrid HFR Depth” prototype generates useful depth images at up to 500 Hz with latency as low as 20 ms. We demonstrate Hybrid HFR Depth in tracking fast-moving objects, handwriting in the air, and projecting onto moving hands. Because it is based on commonly available cameras and image processing implementations, Hybrid HFR Depth may be useful to HCI practitioners seeking to create fast, fluid depth camera-based interactions.
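As a rough illustration of the extrapolation step described in the abstract, the sketch below forward-warps a depth image along a per-pixel flow field. It is not the authors' implementation: the function name `warp_depth`, the `scale` parameter, the nearest-pixel rounding, and the "keep the nearer surface" rule for collisions are all assumptions made for this example, and the flow field is supplied by hand rather than computed from a color camera.

```python
def warp_depth(depth, flow, scale=1.0, hole=0):
    """Forward-warp a depth image along a per-pixel flow field (illustrative only).

    depth: list of rows of depth values; `hole` marks pixels with no reading.
    flow:  list of rows of (dx, dy) pixel displacements observed since the
           depth image was captured.
    scale: 1.0 moves depth to the time of the flow estimate; values above 1.0
           predict further ahead, assuming constant velocity (an assumption).
    """
    h, w = len(depth), len(depth[0])
    out = [[hole] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            d = depth[y][x]
            if d == hole:
                continue  # nothing to move
            dx, dy = flow[y][x]
            nx = x + int(round(dx * scale))
            ny = y + int(round(dy * scale))
            if 0 <= nx < w and 0 <= ny < h:
                # If two source pixels land on the same target,
                # keep the smaller depth (the nearer surface).
                if out[ny][nx] == hole or d < out[ny][nx]:
                    out[ny][nx] = d
    return out


# Hypothetical 3x4 depth image with one valid pixel (800 mm) moving right
# by one pixel per flow estimate.
depth = [[0, 0, 0, 0],
         [0, 800, 0, 0],
         [0, 0, 0, 0]]
flow = [[(1.0, 0.0)] * 4 for _ in range(3)]

extrapolated = warp_depth(depth, flow)           # depth at the flow timestamp
predicted = warp_depth(depth, flow, scale=2.0)   # one further step ahead
```

In this toy example the single valid depth pixel moves from column 1 to column 2 when extrapolated, and to column 3 when predicted one step further. A practical version would also need to fill the holes that forward warping leaves behind and to restrict the warp to the ROI covered by the color camera.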

Downloads

Paper: Download (1.56 MB)

Feedback

Please email us if you have any questions.